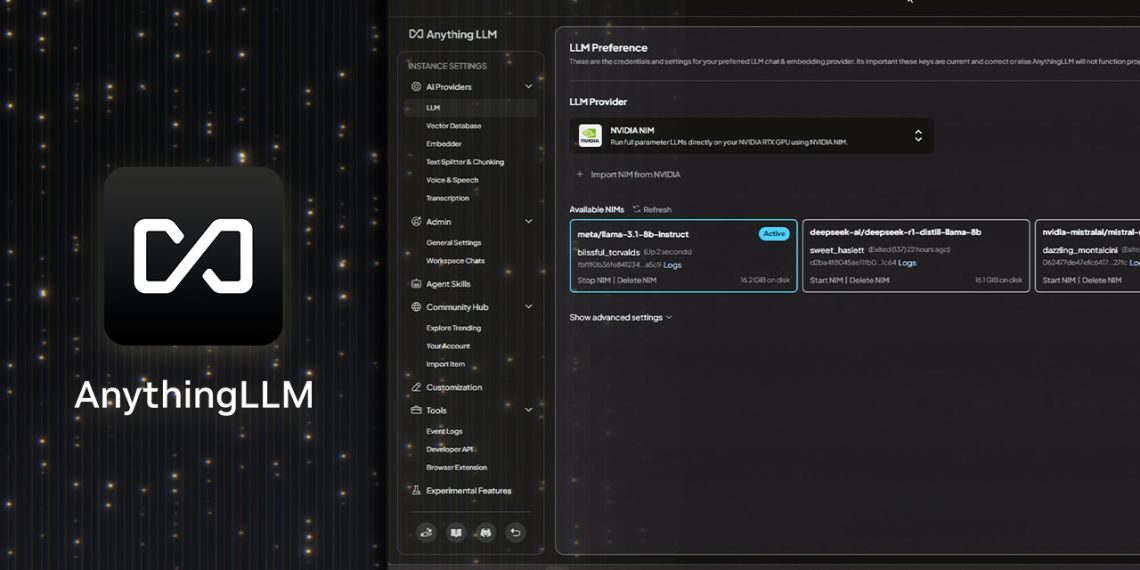

AnythingLLM with NVIDIA RTX is transforming how users run large language models. This powerful desktop app allows AI enthusiasts to deploy local LLMs with ease and privacy — directly from their PC. With new support for NVIDIA NIM microservices, the app now offers faster, more responsive performance on GeForce RTX and NVIDIA RTX PRO GPUs.

AnythingLLM is designed for users who want control over their AI workflows. It enables tasks like answering questions, summarizing documents, analyzing personal files and running agentic actions. Users can connect models such as Llama 3.1 and DeepSeek R1 to their own data, making the app extremely versatile. Additionally, it supports file types like PDFs, Word docs, and entire codebases.

The platform bridges the gap between open-source and cloud models. It works with locally hosted LLMs and integrates with APIs from OpenAI, Microsoft and Anthropic. Users can expand its capabilities using “skills,” available through a growing community hub. These extensions allow for more task automation and richer interactions.

Installing AnythingLLM is simple. With just one click, users can launch it as a standalone app or a browser extension. There’s no need for complicated setup or technical adjustments. For users with RTX-powered systems, the benefits go further. Tensor Cores built into these GPUs accelerate AI operations, leading to significantly faster results.

Performance is also enhanced by support for Ollama, Llama.cpp, and GGML. These tools optimize LLM execution, using NVIDIA’s architecture to its full potential. In benchmarks, GeForce RTX 5090 delivers 2.4x faster inference than Apple’s M3 Ultra — especially with models like Llama 3.1 8B and DeepSeek R1 8B.

Support for NVIDIA NIM microservices pushes usability even further. NIMs are prebuilt, performance-tuned containers that include everything needed to deploy a generative AI model. There’s no need to download model files or configure pipelines manually. Instead, developers can run NIMs locally or move them to the cloud without friction.

By integrating NIMs directly into the AnythingLLM interface, users can test them immediately. They can also connect NIMs to ongoing workflows, or link them with NVIDIA AI Blueprints for full project integration. This reduces friction and speeds up experimentation.

These new features turn AnythingLLM into more than just a chatbot interface. Users can build agents, automate tasks, and explore multimodal AI functions from a single tool. The RTX hardware delivers the performance, and NIM services simplify the experience.

For ongoing learning, the RTX AI Garage blog showcases community innovations weekly. From productivity apps to digital humans, users can explore what’s possible with RTX-powered AI PCs and workstations.

In summary, AnythingLLM with NVIDIA RTX provides unmatched flexibility, privacy and performance for AI enthusiasts. With local LLM support, agent tools, and access to optimized NIM services, it’s an ideal solution for developers and creators ready to push the boundaries of local AI.

READ: NVIDIA RTX Powers DaVinci Resolve 20 and FLUX.1 AI Tools