New Agentic Intelligence Tools for Computer Vision

AI Video Analytics is redefining how organizations process and understand video by combining multimodal reasoning with agentic AI. Current computer vision systems capture what happens in physical spaces, but they often cannot explain why an event matters or what might happen next. Because many industries depend on fast interpretation of footage, teams need systems that add context, generate insights and answer complex questions. Vision language models (VLMs) address these needs by linking natural-language descriptions to the spatial and temporal information in video.

Major Advances Driving Smarter Visual Intelligence

Traditional CNN-based systems detect objects and events but lack broader semantic understanding. They identify what is visible but cannot translate video scenes into structured text. VLMs close this gap through dense captioning: generating detailed text descriptions of video content that serve as rich metadata for flexible search and deeper analysis. Organizations use these captions to analyze patterns, identify changes and reduce manual review. Because VLMs map visual input to language, they help teams track how conditions evolve over long periods of time.
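To make the idea of dense captions as searchable metadata concrete, here is a minimal Python sketch. It assumes each VLM caption is stored with a camera ID and a start and end time; the `Caption` class and `search_captions` function are hypothetical names for illustration, and in a real system the caption text would come from a VLM rather than being written by hand. Search here is plain case-insensitive substring matching; a production system would more likely use a search index or embeddings.

```python
from dataclasses import dataclass


@dataclass
class Caption:
    """One dense caption: a text description tied to a time span of one camera's video."""
    camera_id: str
    start_s: float  # segment start, in seconds
    end_s: float    # segment end, in seconds
    text: str


def search_captions(captions: list[Caption], query: str) -> list[Caption]:
    """Return captions containing every query term (case-insensitive substring match),
    sorted by camera and then by start time."""
    terms = query.lower().split()
    hits = [c for c in captions if all(t in c.text.lower() for t in terms)]
    return sorted(hits, key=lambda c: (c.camera_id, c.start_s))


if __name__ == "__main__":
    # Hypothetical captions standing in for VLM output.
    captions = [
        Caption("dock-2", 0.0, 10.0, "A forklift moves pallets near the loading bay."),
        Caption("dock-2", 10.0, 20.0, "A worker without a helmet walks past the forklift."),
        Caption("lobby", 0.0, 10.0, "Two visitors sign in at the front desk."),
    ]
    for hit in search_captions(captions, "forklift worker"):
        print(hit.camera_id, hit.start_s, hit.end_s, hit.text)
```

Because every caption carries a time span, a search hit points a reviewer straight to the relevant segment instead of the whole recording.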

Dense Captions Enhance Searchable Visual Content

Businesses gain significant value by integrating VLMs into video platforms. UVeye processes hundreds of millions of vehicle images each month and uses VLMs to convert them into detailed condition reports. This improves accuracy and reduces inspection time. Relo Metrics applies a similar approach to sports marketing, analyzing the context in which a brand appears rather than just detecting logos. These insights help companies measure performance in real time and adjust strategies for better returns. In both cases, the value comes from context-rich descriptions rather than raw detections.

Adding Contextual Reasoning to System Alerts

CNN-based systems often deliver binary alerts without context, which can lead to false alarms or an incomplete understanding of incidents. VLMs enhance alerts by adding reasoning that describes where an event occurred and why it matters. Linker Vision uses this approach for smart city applications across thousands of camera feeds. The system verifies events such as accidents or storm damage and coordinates responses across traffic control, utilities and emergency teams. Because the alerts carry context, cities can respond faster and reduce operational risk.
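The difference between a binary alert and a contextual one can be sketched as a change in data structure. In this minimal Python example, a raw detection is dropped if a VLM review rejects it, and otherwise wrapped with a location, an explanation and a list of teams to notify. The `Detection` and `ContextualAlert` classes, the `enrich` function and the lookup tables are all hypothetical; in practice the confirmation and explanation would come from a VLM, and the location and routing from camera metadata and city operations rules.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Detection:
    """A binary CNN-style event: which camera fired, what label, and how confident."""
    camera_id: str
    label: str
    confidence: float


@dataclass
class ContextualAlert:
    """An alert enriched with where the event happened, why it matters, and who should respond."""
    detection: Detection
    location: str
    explanation: str
    route_to: list[str] = field(default_factory=list)


# Hypothetical lookup tables; a deployed system would use real camera metadata
# and operations rules instead of these fixed values.
CAMERA_LOCATIONS = {"cam-17": "Main St & 5th Ave intersection"}
ROUTING = {
    "accident": ["traffic_control", "emergency_services"],
    "fallen_tree": ["utilities", "traffic_control"],
}


def enrich(det: Detection, vlm_confirmed: bool, vlm_explanation: str) -> Optional[ContextualAlert]:
    """Turn a raw detection into a contextual alert, or drop it if the (hypothetical)
    VLM review did not confirm the event."""
    if not vlm_confirmed:
        return None
    return ContextualAlert(
        detection=det,
        location=CAMERA_LOCATIONS.get(det.camera_id, "unknown location"),
        explanation=vlm_explanation,
        # Copy the routing list so alerts never share (and mutate) the table's list.
        route_to=list(ROUTING.get(det.label, ["operations_center"])),
    )
```

Returning `None` for rejected detections is one simple way to model VLM verification filtering out false alarms before anyone is notified.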

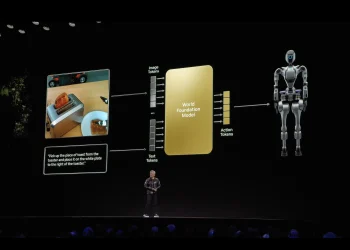

Agentic AI Enables Deeper Scenario Analysis

AI Video Analytics becomes even more powerful when VLMs are paired with reasoning models, large language models (LLMs), retrieval-augmented generation (RAG) and speech transcription. This combination can process large video archives, answer complex queries and summarize long sequences. Basic VLM integration is useful for short clips, but agentic architectures enable full-scene understanding across time and modalities. These systems support inspections, safety reviews and infrastructure analysis by delivering deeper insights than traditional pipelines.
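A common agentic pattern behind long-video question answering is retrieval-augmented generation: store text for each video chunk (VLM captions, optionally merged with speech transcripts), retrieve the chunks most relevant to a question, and pass only those to an LLM. The sketch below assumes that pattern and uses simple word-overlap scoring as a stand-in for an embedding model; `retrieve` and `build_prompt` are hypothetical helpers, and the LLM call itself is omitted.

```python
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase alphanumeric word tokens; a crude stand-in for a real embedding model."""
    return re.findall(r"[a-z0-9]+", text.lower())


def retrieve(chunks: dict[str, str], question: str, k: int = 2) -> list[str]:
    """Rank chunks by how many question tokens they share and return up to k chunk ids
    with a nonzero score, best first (ties broken by chunk id)."""
    q = Counter(tokenize(question))
    scores = {}
    for chunk_id, text in chunks.items():
        c = Counter(tokenize(text))
        scores[chunk_id] = sum(min(q[t], c[t]) for t in q)
    ranked = sorted(scores, key=lambda cid: (-scores[cid], cid))
    return [cid for cid in ranked[:k] if scores[cid] > 0]


def build_prompt(chunks: dict[str, str], question: str, k: int = 2) -> str:
    """Assemble an LLM prompt grounded only in the retrieved chunk text."""
    context = "\n".join(f"[{cid}] {chunks[cid]}" for cid in retrieve(chunks, question, k))
    return (
        "Answer using only the video captions below and cite chunk ids.\n"
        f"{context}\n\nQuestion: {question}"
    )


if __name__ == "__main__":
    # Hypothetical per-minute captions for one camera.
    chunks = {
        "00:00-01:00": "A truck parks at gate 3.",
        "01:00-02:00": "The driver opens the truck cargo door at gate 3.",
        "02:00-03:00": "The parking lot is empty.",
    }
    print(build_prompt(chunks, "What happened at gate 3 with the truck?"))
```

Restricting the prompt to retrieved chunks keeps it within the LLM's context limit even when the archive holds hours of footage, and the chunk IDs let the answer cite specific time ranges.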

Real-World Use Cases for Automated Inspection

Levatas demonstrates the potential of agentic AI by automating infrastructure inspections. Its systems analyze video from mobile robots and autonomous drones to identify thermal issues, equipment damage and structural risks. For utilities such as American Electric Power (AEP), these tools accelerate inspection workflows and improve reliability. Alerts go out as soon as a problem is detected, so crews can respond quickly. Because the agent can review long videos autonomously, human teams can focus on priority tasks rather than manual scanning.
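One way an agent can review long inspection videos without a human scanning them is to split the footage into overlapping time windows and analyze each window separately. This is a minimal sketch of that general idea, not Levatas's method: `chunk_spans` and `review` are hypothetical helpers, and the `analyze` callback stands in for a VLM call that returns a finding (for example, a thermal anomaly) or `None`.

```python
from typing import Callable, Optional


def chunk_spans(duration_s: float, chunk_s: float, overlap_s: float = 0.0) -> list[tuple[float, float]]:
    """Split [0, duration_s) into windows of chunk_s seconds that overlap by overlap_s seconds.
    The last window is clipped to the end of the video."""
    if chunk_s <= 0 or not 0 <= overlap_s < chunk_s:
        raise ValueError("need chunk_s > 0 and 0 <= overlap_s < chunk_s")
    step = chunk_s - overlap_s
    spans = []
    start = 0.0
    while start < duration_s:
        end = min(start + chunk_s, duration_s)
        spans.append((start, end))
        if end >= duration_s:
            break  # avoid a redundant tail window fully covered by the previous one
        start += step
    return spans


def review(
    spans: list[tuple[float, float]],
    analyze: Callable[[float, float], Optional[str]],
) -> list[tuple[float, float, str]]:
    """Run the (hypothetical) per-window analyzer and keep only windows with a finding."""
    findings = []
    for start, end in spans:
        finding = analyze(start, end)
        if finding is not None:
            findings.append((start, end, finding))
    return findings


if __name__ == "__main__":
    spans = chunk_spans(100.0, 30.0, overlap_s=5.0)
    print(spans)
    # Fake analyzer: pretend a hot spot is visible at t = 60 s.
    print(review(spans, lambda s, e: "hot spot" if s <= 60 < e else None))
```

The overlap is a design choice: any event no longer than the overlap is fully contained in at least one window, at the cost of analyzing some footage twice.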

Tools Powering the Next Generation of Video Intelligence

NVIDIA provides models such as NV-CLIP, Cosmos Reason and Nemotron Nano V2 for multimodal search and reasoning. Developers can integrate VLMs through the NVIDIA Blueprint for video search and summarization, which includes event reviewer features that simplify deployment. For more complex use cases, developers can combine VLMs with LLMs, RAG and computer vision models to build AI agents. This flexibility lets teams build accurate, real-time video analytics that scale across industries.
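Multimodal search with CLIP-style models such as NV-CLIP typically works by embedding video frames and a text query into a shared vector space and ranking frames by similarity. The sketch below shows only that ranking step, assuming the embeddings have already been computed; the short vectors are made up for illustration, and `cosine` and `top_k` are hypothetical helpers, not part of any NVIDIA API.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query_vec: list[float], frame_vecs: dict[str, list[float]], k: int = 3) -> list[tuple[str, float]]:
    """Return (frame_id, score) for the k frames most similar to the query embedding."""
    scored = [(fid, cosine(query_vec, vec)) for fid, vec in frame_vecs.items()]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:k]


if __name__ == "__main__":
    # Made-up 3-dimensional embeddings; real models produce much longer vectors.
    frames = {"f1": [1.0, 0.0, 0.0], "f2": [0.7, 0.7, 0.0], "f3": [0.0, 0.0, 1.0]}
    text_query = [1.0, 0.1, 0.0]  # pretend embedding of a text query
    for frame_id, score in top_k(text_query, frames, k=2):
        print(frame_id, round(score, 3))
```

In practice, a vector database or approximate nearest-neighbor index replaces this linear scan once the number of frames grows large.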

The Growing Impact of Agentic AI in Vision Systems

AI Video Analytics is transforming how organizations interpret visual data by enabling deeper reasoning, clearer insights and faster responses. With dense captioning, improved alerts and agentic architectures, teams can move beyond basic detection and toward full-scene understanding. As more developers adopt NVIDIA’s tools and multimodal systems, video intelligence will continue evolving into a strategic asset that improves safety, efficiency and decision-making.