Foundation models represent a paradigm shift in AI, offering versatile, large-scale neural networks that can be adapted for a variety of tasks. From natural language processing (NLP) and computer vision to scientific research and robotics, these AI models have transformed how machines understand, generate, and process data.

The Stanford AI Index Report 2024 recorded 149 foundation models published in 2023, more than double the number released in 2022. This rapid growth underscores the significance of foundation models in AI development.

Definition: What Are Foundation Models?

A foundation model is a large neural network trained on massive datasets, typically with self-supervised learning, that serves as a general-purpose base for many applications. Unlike traditional AI models, which are trained from scratch for a single task, foundation models can be fine-tuned for a variety of domains, making them highly adaptable.

Key Characteristics of Foundation Models

✔ Massive Training Data – Typically trained on unlabeled datasets consisting of text, images, audio, and video.

✔ Generalized Learning – Unlike task-specific models, foundation models can be adapted for various applications.

✔ Self-Supervised Learning – Learn patterns without requiring manual labeling, saving time and cost.

✔ Scalable & Efficient – Can be fine-tuned with a comparatively small amount of additional data, rather than retrained from scratch, for improved accuracy and reliability on a target task.
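To make the self-supervised idea above concrete, here is a minimal sketch of how training targets can be derived from raw text itself, with no manual labels. It assumes a toy whitespace tokenizer and a next-word prediction objective; the function name `next_token_pairs` and the `context_size` parameter are hypothetical, and production models use subword tokenizers and far longer contexts.

```python
def next_token_pairs(text, context_size=3):
    """Turn raw text into (context, target) pairs without manual labels."""
    # Toy whitespace tokenizer (an assumption for illustration only).
    tokens = text.split()
    pairs = []
    for i in range(context_size, len(tokens)):
        context = tokens[i - context_size:i]  # the words the model sees
        target = tokens[i]                    # the word it must predict
        pairs.append((context, target))
    return pairs


pairs = next_token_pairs("foundation models learn patterns from raw unlabeled text")
for context, target in pairs:
    print(context, "->", target)
```

Every target here is simply the next word in the input, which is why this style of training scales to very large unlabeled corpora.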

🔍 Example: GPT-3, a foundation model developed by OpenAI, contains 175 billion parameters and was trained on roughly 300 billion tokens of text, enabling it to perform multiple NLP tasks like text summarization, translation, and code generation.

The Evolution of Foundation Models

1. Early AI & Neural Networks (1950s–2010s)

Early AI models were task-specific: they required large amounts of labeled data and were designed for narrow applications such as image recognition or speech-to-text conversion.

2. The Rise of Transformers (2017–2020)

📌 2017: The Transformer model (Vaswani et al., Google Brain) introduced self-attention mechanisms, improving AI’s ability to process long-range dependencies in text.

📌 2018: BERT (Bidirectional Encoder Representations from Transformers) advanced NLP by representing each word using the context on both its left and its right.

📌 2020: GPT-3 (Generative Pre-trained Transformer 3) showed that a single 175-billion-parameter model could generate human-like text and perform many tasks from only a few examples in the prompt.

3. The Generative AI Boom (2020–Present)

✔ ChatGPT (2022) – Reached an estimated 100 million users within about two months of launch, marking AI’s mainstream adoption.

✔ Diffusion Models (2022–2023) – Text-to-image models like Midjourney and Stable Diffusion exploded in popularity.

✔ Multimodal AI (2024) – Models like Gemini Ultra and Cosmos Nemotron can process several modalities, such as text, images, video, and audio, within a single model.

Types of Foundation Models

🔹 Large Language Models (LLMs) – Examples: GPT-4, Llama, Claude

🔹 Vision-Language Models (VLMs) – Examples: CLIP, DALL·E, Cosmos Nemotron

🔹 Diffusion Models – Examples: Stable Diffusion, Midjourney

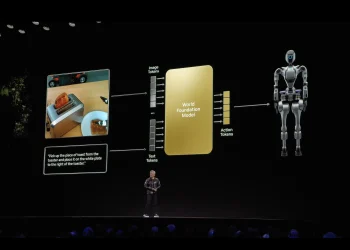

🔹 World Foundation Models – Simulate physical environments for robotics, autonomous systems, and digital twins

How Foundation Models Work

1. Training Process

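Foundation models learn from raw, unlabeled data using self-supervised learning techniques, in which the training signal comes from the data itself (for example, predicting the next word in a sentence). Transformers, the dominant architecture, use self-attention to weigh how strongly each element of an input sequence relates to every other element.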
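The self-attention step mentioned above can be sketched in a few lines of NumPy. This is a minimal, single-head illustration of scaled dot-product attention under stated assumptions: the input embeddings and projection matrices are random placeholders rather than trained weights, and the names `self_attention`, `w_q`, `w_k`, and `w_v` are chosen here for illustration.

```python
import numpy as np


def softmax(x, axis=-1):
    """Numerically stable softmax along the given axis."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def self_attention(x, w_q, w_k, w_v):
    """Single-head scaled dot-product self-attention over one sequence."""
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    # Similarity of every position with every other position, scaled by sqrt(d_head).
    scores = q @ k.T / np.sqrt(k.shape[-1])
    # Each row becomes a probability distribution over positions.
    weights = softmax(scores, axis=-1)
    # Each output row is a weighted mix of the value vectors.
    return weights @ v, weights


rng = np.random.default_rng(0)
seq_len, d_model, d_head = 4, 8, 8
x = rng.normal(size=(seq_len, d_model))  # placeholder token embeddings
w_q, w_k, w_v = (rng.normal(size=(d_model, d_head)) for _ in range(3))

output, weights = self_attention(x, w_q, w_k, w_v)
print(output.shape)          # one output vector per input position
print(weights.sum(axis=-1))  # each row of attention weights sums to 1
```

Because every position attends to every other position directly, distant elements of a sequence can influence each other in a single step.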

2. Fine-Tuning for Specific Applications

Once trained, foundation models can be fine-tuned with domain-specific datasets to improve accuracy and performance for specific tasks.

📌 Example: A general medical AI model trained on millions of health records can be fine-tuned to specialize in breast cancer detection.
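As a rough illustration of adapting a pretrained model to a narrower task, the sketch below trains a small classification head on top of a frozen feature extractor. This is a lightweight variant of fine-tuning (sometimes called linear probing); full fine-tuning would also update the base model's weights. Everything here is an assumption for illustration: the "base model" is a fixed random projection standing in for a real pretrained network, and the labeled dataset is synthetic.

```python
import numpy as np

rng = np.random.default_rng(0)

# Stand-in for a pretrained base model: a fixed (frozen) feature extractor.
w_base = rng.normal(size=(10, 16))


def base_features(x):
    """Frozen base model: its weights are never updated below."""
    return np.tanh(x @ w_base)


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def log_loss(p, y):
    """Mean binary cross-entropy, with a small epsilon for stability."""
    eps = 1e-9
    return float(-np.mean(y * np.log(p + eps) + (1 - y) * np.log(1 - p + eps)))


# Small labeled, domain-specific dataset (synthetic, for illustration).
x_train = rng.normal(size=(200, 10))
y_train = (x_train[:, 0] + x_train[:, 1] > 0).astype(float)

feats = base_features(x_train)
w_head = np.zeros(16)  # task-specific head: the only trainable parameters
b_head = 0.0
initial_loss = log_loss(sigmoid(feats @ w_head + b_head), y_train)

learning_rate = 0.1
for _ in range(500):
    p = sigmoid(feats @ w_head + b_head)
    grad = p - y_train  # gradient of the loss with respect to the logits
    w_head -= learning_rate * feats.T @ grad / len(y_train)
    b_head -= learning_rate * grad.mean()

final_loss = log_loss(sigmoid(feats @ w_head + b_head), y_train)
print(f"loss before: {initial_loss:.3f}, after: {final_loss:.3f}")
```

Only the 17 head parameters are trained, which is why this kind of adaptation needs far less data and compute than training the base model.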

Applications of Foundation Models

Foundation models are reshaping multiple industries, including:

1. Natural Language Processing (NLP)

✅ Chatbots & Virtual Assistants – AI-powered customer support & content generation

✅ Translation & Summarization – LLMs like Google Gemini and GPT-4 enable real-time translations

✅ Content Creation – AI-generated news articles, reports, and scripts

2. Healthcare & Drug Discovery

✅ Medical Image Analysis – AI models trained on MRI, X-ray, and CT scan datasets

✅ Genomic Research – Evo 2, a genomic foundation model trained on DNA sequences, predicts the effects of genetic variants and generates new genome sequences

✅ Personalized Medicine – AI-driven drug design & disease prediction

3. Autonomous Vehicles & Robotics

✅ Self-Driving Cars – AI models trained on millions of driving hours

✅ Humanoid Robots – World foundation models simulate real-world physics for robotics

✅ Smart Factories – AI-powered automation for manufacturing

4. Generative AI (Text, Images, Video, Music)

✅ Image Synthesis – Models like DALL·E and Midjourney generate photo-realistic images

✅ Video Creation – AI generates animations, deepfakes, and realistic simulations

✅ AI-Generated Music – Neural networks compose original music

The Future of Foundation Models

As AI research evolves, foundation models are becoming larger, faster, and more capable. Some key trends shaping the future include:

🔹 Multimodal AI – Models will process multiple data types (text, images, audio, video) seamlessly.

🔹 World Foundation Models – AI will simulate real-world environments for autonomous machines and robotics.

🔹 Edge AI & Real-Time Processing – Foundation models will be optimized to run on local devices for instant decision-making.

🔹 AI Safety & Ethics – Developers will need to address bias, misinformation, and ethical concerns in AI-generated content.

📌 Example: NVIDIA Cosmos world foundation models were trained on 20 million hours of driving and robotics data and are intended to help developers build safe, efficient AI-powered robots.

Challenges & Ethical Considerations

Despite their potential, foundation models pose challenges, including:

🔴 Bias in Training Data – AI models can amplify biases present in datasets.

🔴 Misinformation Risks – Generative AI can produce misleading or fabricated content.

🔴 Intellectual Property Issues – AI-generated content raises legal concerns over ownership and copyright.

🔴 Environmental Impact – Training large AI models requires massive computational power, increasing carbon emissions.
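To give a sense of scale for the computational cost noted above, a rough estimate can be made with the widely used rule of thumb that training a dense transformer takes about 6 floating-point operations per parameter per training token. The sketch below applies it to GPT-3, using the 175 billion parameters cited in this article and the 300 billion training tokens reported in the GPT-3 paper. The helper name `training_flops` is hypothetical, and the result is an order-of-magnitude approximation, not a measurement of energy use or emissions.

```python
def training_flops(n_params, n_tokens):
    """Approximate training compute with the 6 * N * D rule of thumb."""
    return 6 * n_params * n_tokens


gpt3_params = 175e9  # parameters
gpt3_tokens = 300e9  # training tokens, as reported in the GPT-3 paper

flops = training_flops(gpt3_params, gpt3_tokens)
# One petaflop/s-day is 1e15 operations per second sustained for 86,400 seconds.
petaflop_s_days = flops / (1e15 * 86_400)
print(f"{flops:.2e} FLOPs, about {petaflop_s_days:,.0f} petaflop/s-days")
```

The estimate comes to roughly 3 x 10^23 operations, which is why training runs of this size occupy large accelerator clusters for weeks.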

🛠 Solutions Under Development:

✔ Filtering AI-generated content to detect inaccuracies and bias

✔ Developing regulatory frameworks for ethical AI deployment

✔ Optimizing AI models to be more energy-efficient

Conclusion: The AI Revolution Continues

Foundation models have redefined AI, transforming how machines learn, reason, and interact with the world. With applications spanning NLP, healthcare, robotics, and generative AI, they are shaping the future of science, industry, and everyday life.

🚀 As AI research progresses, foundation models will continue to evolve, unlocking even more groundbreaking capabilities.

🔗 Stay updated with NVIDIA’s latest AI advancements at nvidia.com.